- An incredibly intelligent donor, perhaps from outer space, has prepared two boxes for you: a big one and a small one. The small one (which might as well be transparent) contains $1,000. The big one contains either $1,000,000 or nothing. You have a choice between accepting both boxes or just the big box. It seems obvious that you should accept both boxes (because that gives you an extra $1,000 irrespective of the content of the big box), but here’s the catch: The donor has tried to predict whether you will pick one box or two boxes. If the prediction is that you pick just the big box, then it contains $1,000,000, whereas if the prediction is that you pick both boxes, then the big box is empty. The donor has exposed a large number of people before you to the same experiment and predicted correctly [every] time, regardless of whether subjects chose one box or two.1

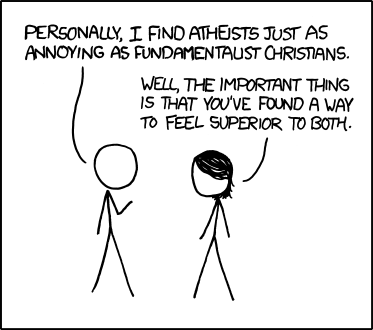
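The payoff structure above can be made concrete with some arithmetic. The sketch below is illustrative only. It introduces a hypothetical accuracy parameter `p`: the probability that the prediction is correct, assumed to be the same whichever choice you make (the passage describes `p = 1`). It then computes each option's expected payoff by conditioning on your own choice. That conditioning is exactly what two-boxers object to, because the dominance argument holds the box contents fixed. Read this as the one-boxer's calculation, not as a resolution of the problem.

```python
from fractions import Fraction

SMALL = 1_000      # contents of the small (transparent) box
BIG = 1_000_000    # contents of the big box when the prediction is "one box"


def expected_payoffs(p):
    """Expected payoff of (one box, two boxes), assuming a hypothetical
    predictor that is right with probability p whatever you choose."""
    one_box = p * BIG                  # big box is full only if "one box" was (correctly) predicted
    two_box = SMALL + (1 - p) * BIG    # big box is full only if the prediction was wrong
    return one_box, two_box


def break_even_accuracy():
    # Solve p * BIG = SMALL + (1 - p) * BIG for p, using exact rational arithmetic.
    return Fraction(SMALL + BIG, 2 * BIG)


for p in (Fraction(1), Fraction(9, 10), Fraction(1, 2)):
    one, two = expected_payoffs(p)
    print(f"p = {p}: one box {one}, two boxes {two}")
print("break-even accuracy:", break_even_accuracy())
```

Setting p·1,000,000 = 1,000 + (1 - p)·1,000,000 gives a break-even accuracy of 1001/2000 = 0.5005. On this calculation, a predictor only slightly better than a coin flip already favors taking one box.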

- To almost everyone, it is perfectly clear and obvious what should be done. The difficulty is that these people seem to divide almost evenly on the problem, with large numbers thinking that the opposing half is just being silly.2

- I can give you my own attempt at a resolution, which has helped me to be an intellectually fulfilled one-boxer. [Quantum Computing since Democritus, p. 296]

- Now let's get back to the earlier question of how powerful a computer the Predictor has. Here's you, and here's the Predictor's computer. Now, you could base your decision to pick one or two boxes on anything you want. You could just dredge up some childhood memory and count the letters in the name of your first-grade teacher or something and based on that, choose whether to take one or two boxes. In order to make its prediction, therefore, the Predictor has to know absolutely everything about you. It's not possible to state a priori what aspects of you are going to be relevant in making the decision. To me, that seems to indicate that the Predictor has to solve what one might call a "you-complete" problem. In other words, it seems the Predictor needs to run a simulation of you that's so accurate it would essentially bring into existence another copy of you.

  Let's play with that assumption. Suppose that's the case, and that now you're pondering whether to take one box or two boxes. You say, "all right, two boxes sounds really good to me because that's another $1,000." But here's the problem: when you're pondering this, you have no way of knowing whether you're the "real" you, or just a simulation running in the Predictor's computer. If you're the simulation, and you choose both boxes, then that actually is going to affect the box contents: it will cause the Predictor not to put the million dollars in the box. And that's why you should take just the one box. [Quantum Computing since Democritus, pp. 296-297]
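The simulation argument can be illustrated with a toy model. This is a hypothetical sketch, not code from the book. A strategy is a deterministic Python function that returns `"one"` or `"two"`. The Predictor makes its prediction by calling that same function before filling the big box. Because the simulated copy and the real you run the same procedure, the prediction always matches the choice. The names `play`, `one_boxer`, `two_boxer` and `teacher_letter_count` (including its made-up teacher's name) are all invented for the example.

```python
from typing import Callable

SMALL = 1_000
BIG = 1_000_000

Strategy = Callable[[], str]  # a deterministic decision procedure returning "one" or "two"


def play(decide: Strategy) -> int:
    """Toy Newcomb round: the Predictor 'simulates' you by running your own
    decision procedure before filling the boxes (hypothetical model)."""
    prediction = decide()                     # the simulated copy of you chooses
    big_box = BIG if prediction == "one" else 0
    choice = decide()                         # the "real" you chooses, with the same procedure
    return big_box if choice == "one" else big_box + SMALL


def one_boxer() -> str:
    return "one"


def two_boxer() -> str:
    return "two"


def teacher_letter_count() -> str:
    # Quirky rule in the spirit of the text; the teacher's name is made up.
    name = "Henderson"
    return "one" if len(name) % 2 == 1 else "two"


print(play(one_boxer))             # 1000000
print(play(two_boxer))             # 1000
print(play(teacher_letter_count))  # 1000000 (9 letters, odd, so "one")
```

Whatever rule a deterministic strategy uses, even one as arbitrary as counting letters, the simulated choice equals the real one. A procedure that outputs "one" therefore collects $1,000,000, and one that outputs "two" collects $1,000. The model simply assumes determinism and does not cover randomized strategies.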